For the last few years, I’ve been using Quadra Hosting for my web hosting needs. Their servers are great, with good speed and connection, and they’re reasonably priced. They also have the best customer and technical support that I’ve ever seen, bar none. I highly recommend them if you’re looking for a new web host.

Let’s Encrypt is a project that has set out to ensure that all web traffic is encrypted. To that end, they have replaced the traditional process of obtaining SSL certificates with a much simpler one, and they provide the certificates for free.

Also, Chrome will soon be warning users any time they visit an unencrypted website. With these two factors in mind, I set about installing a Let’s Encrypt key on my Quadra Hosting account. As you can see from the green lock in the address bar for this site, I was successful!

Let’s Encrypt’s preferred method of installation is via the Certbot client, which runs on the server and installs and renews the keys. However, after a quick check with the technical support team at Quadra, I realised that this wasn’t going to work on shared hosting, where I don’t have root access to the server.

Fortunately, Let’s Encrypt and others have provided a variety of other ways to install keys, with scripts available in a variety of languages. One that I came across was a bash script that can run on shared hosting without root access. It’s simply called acme.sh, and it worked really well.

Here’s the process that I went through to set up Let’s Encrypt with the acme.sh script.

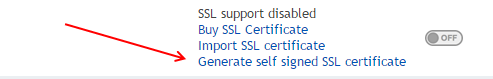

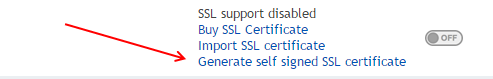

1. Go to your Quadra Hosting control panel and turn on SSL connections by going to ‘Domain Settings’, then ‘SSL’, and then clicking ‘Generate self signed SSL certificate’.

Click ‘submit’ on the next page, then just ignore the page of keys that comes up after that. You won’t be using those keys, but generating them activates the SSL functionality on the server for your domain.

2. Next you’ll need to install the acme.sh script. To do this, and most of the rest of the steps, you’ll need shell access. It’s not turned on by default, so you’ll need to fill out the shell access request form and send it through to the Quadra Hosting support team. Then, once you’re logged in, enter:

curl https://get.acme.sh | sh

This will download and install the acme.sh script. The installer also tries to set up an alias for acme.sh, but that failed for me, so I had to manually edit the .bashrc file and add the following line:

alias acme.sh='~/.acme.sh/acme.sh'

This can be done simply by entering the following line:

printf "\nalias acme.sh='~/.acme.sh/acme.sh'\n" >> ~/.bashrc

Then reload your .bashrc, either by logging out and back in or by typing:

source ~/.bashrc
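
If you run the printf line more than once, you’ll end up with duplicate alias lines. As a rough sketch (the `add_acme_alias` function is a hypothetical helper, not part of acme.sh), something like this only appends the alias when it isn’t already in the file:

```shell
# Append the acme.sh alias to an rc file only if it is not already there.
# Usage: add_acme_alias [rcfile]   (defaults to ~/.bashrc)
# NOTE: add_acme_alias is an illustrative name, not an acme.sh command.
add_acme_alias() {
    rcfile="${1:-$HOME/.bashrc}"
    alias_line="alias acme.sh='~/.acme.sh/acme.sh'"

    # -F: fixed string, -x: match the whole line, -q: no output
    if [ -f "$rcfile" ] && grep -qFx "$alias_line" "$rcfile"; then
        echo "alias already present in $rcfile"
    else
        # The leading newline guards against a file with no final newline
        printf '\n%s\n' "$alias_line" >> "$rcfile"
        echo "alias added to $rcfile"
    fi
}
```

Call `add_acme_alias` with no arguments to update `~/.bashrc`, then reload it as described above.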

3. With the script set up, you can now download and install your SSL key. The first step is done with the ‘issue’ command:

acme.sh --issue -d systematictechnology.net -d www.systematictechnology.net -w ~/systematictechnology.net/

This contacts the Let’s Encrypt servers, generates the SSL keys, and copies them to the acme.sh install directory. It then reports the location of the new certificates:

[Thu Nov 10 01:46:53 GMT 2016] Your cert is in /hsphere/local/home/xxxx/.acme.sh/systematictechnology.net/systematictechnology.net.cer

[Thu Nov 10 01:46:53 GMT 2016] Your cert key is in /hsphere/local/home/xxxx/.acme.sh/systematictechnology.net/systematictechnology.net.key

[Thu Nov 10 01:46:53 GMT 2016] The intermediate CA cert is in /hsphere/local/home/xxxx/.acme.sh/systematictechnology.net/ca.cer

[Thu Nov 10 01:46:53 GMT 2016] And the full chain certs is there: /hsphere/local/home/xxxx/.acme.sh/systematictechnology.net/fullchain.cer
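
Before moving on, it’s worth confirming that all four files really are where the output says. Here is a minimal sketch, assuming the default `~/.acme.sh` install directory and the file names shown in the output above (the `check_acme_files` helper is hypothetical, not an acme.sh command):

```shell
# Report which of the four files listed by `acme.sh --issue` exist.
# Usage: check_acme_files <domain> [acme_home]
# NOTE: check_acme_files is an illustrative name; acme_home defaults to
# ~/.acme.sh, which is an assumption about where the script was installed.
check_acme_files() {
    domain="$1"
    acme_home="${2:-$HOME/.acme.sh}"
    dir="$acme_home/$domain"
    missing=0

    for f in "$domain.cer" "$domain.key" ca.cer fullchain.cer; do
        # -s: the file exists and is not empty
        if [ -s "$dir/$f" ]; then
            echo "ok      $dir/$f"
        else
            echo "MISSING $dir/$f"
            missing=1
        fi
    done
    return "$missing"
}
```

Running `check_acme_files systematictechnology.net` prints one line per file and returns a non-zero status if any of them are missing or empty.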

4. Next up, you need to install the newly created certificates. This is done twice: first through the control panel, then through the command line. This is so that both Quadra and the script know about the certificates.

First, log on to the server with an FTP client and download or view the four certificate files listed in the key creation message above.

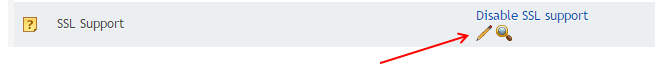

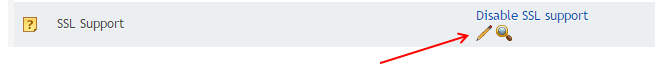

On the control panel, go to ‘web options’ then select ‘edit SSL support’.

This will bring up a page with fields into which you can paste the keys, as follows:

- Install yoursite.key to ‘Certificate key’

- Install yoursite.cer to ‘Certificate file’

- Install ca.cer to ‘Certificate Chain file’

The Certificate Key and Certificate file can be uploaded at the same time, but the Certificate Chain File will need to be uploaded separately.

Once that is done, go back to the command line. You’ll need to change the permissions on the files in the SSL key folder:

chmod 0600 ~/ssl.conf/systematictechnology.net/*
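
To confirm the change took effect, you could list any files that are not mode 600. This is just a sketch using standard `find`; the `list_loose_files` name is made up for illustration:

```shell
# List regular files under a directory whose mode is not exactly 600.
# Prints nothing when every file is already locked down.
# Usage: list_loose_files <dir>
# NOTE: list_loose_files is an illustrative name, not a standard command.
list_loose_files() {
    # -perm 600 matches the mode exactly; "!" negates the match
    find "$1" -type f ! -perm 600
}
```

`list_loose_files ~/ssl.conf/systematictechnology.net` should print nothing once the chmod has been applied.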

Install the keys again using the acme script:

acme.sh --installcert -d systematictechnology.net --certpath ~/ssl.conf/systematictechnology.net/server.crt --keypath ~/ssl.conf/systematictechnology.net/server.key --capath ~/ssl.conf/systematictechnology.net/ca.crt

Note that this will throw a few errors: the script will also try to install some backup keys, which it won’t be able to do. I don’t consider this a problem, as the keys’ primary location is in the acme.sh directory anyway.

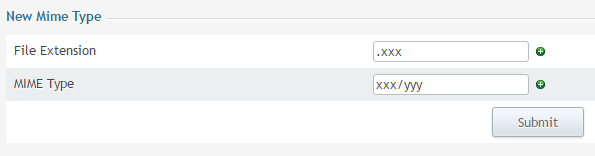

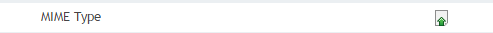

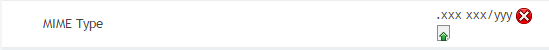

5. Restart your Apache instance to load the new keys. There is no direct way to do this, but the Quadra support team suggested a simple workaround. Go to Web Options for your domain in the control panel, then click on the ‘Mime Type’ button.

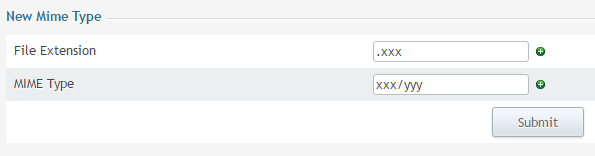

On the window that pops up, enter some dummy data and hit ‘submit’.

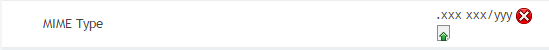

Then click on Save / Apply in the Web Options window. Wait a few minutes, then click on the red ‘X’ next to the new Mime type. This will delete the new entry.

Click Save / Apply again. This will restart the Apache instance and load the new keys.

6. Test the installed certificate. Wait five minutes for the server to restart, then go to https://yoursite.com . If all the steps above have worked, the site should load over HTTPS with the lock showing in the address bar. Next, check that the cron job to renew the certificates has been installed correctly. This should have happened when the acme.sh script was installed.

This can be done through either the control panel (go home -> tools -> cron) or through the command line:

crontab -l
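
If your crontab is long, a small filter can pick out the renewal job. This sketch assumes the entry that acme.sh installs calls the script with `--cron`, so check it against your own output; `has_acme_cron` is a hypothetical helper name:

```shell
# Succeeds if the crontab text on stdin contains an acme.sh renewal entry.
# Assumption: the entry runs acme.sh with --cron.
# Commented-out lines are ignored.
# NOTE: has_acme_cron is an illustrative name, not an acme.sh command.
has_acme_cron() {
    grep -v '^[[:space:]]*#' | grep -q -e 'acme\.sh.*--cron'
}
```

For example, `crontab -l | has_acme_cron && echo "renewal job found"` prints the message only when a matching entry exists.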

You can also run SSL Labs’ SSL test to confirm that everything is working correctly.
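
For a quick check from the shell, curl can do a similar job, since by default it refuses the connection when the certificate chain doesn’t validate. A rough sketch, with `check_https` being a made-up helper name and the 15 second timeout an arbitrary choice:

```shell
# Succeeds if https://<host>/ answers with a certificate that curl trusts.
# curl verifies the certificate by default, so a self-signed or expired
# certificate makes this fail.
# Usage: check_https <host>
# NOTE: check_https is an illustrative name; the timeout is arbitrary.
check_https() {
    host="$1"
    if curl --silent --show-error --head --max-time 15 \
            --output /dev/null "https://$host/"; then
        echo "https://$host/ OK"
    else
        echo "https://$host/ FAILED" >&2
        return 1
    fi
}
```

`check_https yoursite.com` prints an OK line on success; on failure, curl’s own error message on stderr says what went wrong.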

7. Celebrate! You’ve now got SSL working on your site, and you’re doing your small bit to make the whole web a bit more secure.

Addendum:

After I posted this article, I sent a link through to Quadra support with a note along the lines of ‘here’s a guide I made up, please feel free to send it to any other customers who might be trying to do the same thing.’

I shortly got a response from the support team. The team member who’d been helping me with this setup not only responded and corrected some mistakes I’d made, but he also wrote: “You could also mention that we would be happy to do all this for them if they asked us to, e.g. if they are not used to shell commands.”

This surprised me no end. The Quadra Hosting team sell a one-click SSL implementation, which only costs $30 and requires no technical skills at all. Rather than suggesting “You could also mention that we have a cheap one-click SSL implementation if they are having difficulty with Let’s Encrypt”, they offered to forgo that money and instead spend time helping you implement a no-cost alternative.

If you’re not with Quadra yet for your hosting, change. This is just a small example of how good they are.